WordPress in AWS – the Real World

This article outlines one way to wire up WordPress AT SCALE. In the real world, there can be separate and distinct development, staging and production environments. So how should we move information between them, and how should this system work?

Here we take the AWS CodeDeploy/WordPress example to the next level. Standard disclaimer – there are many approaches to this, so adjust to suit your needs. There is room for improvement, so feel free to comment!

In our scenario we have three environments:

- Development environments – The dev team handles theme development, as well as configuration management. So these environments live on their local machines.

- Staging – Any functional changes are tested here before being “elevated” to Production. The development team manages the push of code to this environment.

- Production – Content authors make changes directly to a Production instance. Once functional changes are tested in Staging, the Production Manager can deploy them here. Content approval may be implemented by means of a plugin if desired.

Our overarching challenge is that WordPress stores its content in two places: in its database and on disk. We need to handle deployment of the WordPress PHP code, themes and any plugins in use. We also want the ability to see all content in any environment.

Overall Architecture

The site runs on multiple EC2 instances which are situated behind an Application Load Balancer (ALB). CloudFront provides caching services, and a web application firewall (WAF) is attached. As is typical in these scenarios, DNS is set up so that mysite.com and www.mysite.com point to CloudFront, and origin.mysite.com points to the ALB.

Source Control

This is an important place to start. We have the ENTIRE WordPress code base checked into git, using AWS CodeCommit. You might also consider using GitHub due to its unique CodeDeploy integration features.

This means that the dev team is responsible for updating WordPress and plugins. They are updated in a dev environment, checked into the code repository, and deployed to production. In addition, activation of plugins must be scripted, for when new instances come online (see The WordPress Command Line, below).

AWS CodeDeploy

The code in our source control is deployed using AWS CodeDeploy. This allows us to deploy any changes into a somewhat vanilla AMI. I say “somewhat” because an instance running CodeDeploy requires two things: the CodeDeploy agent and an IAM role with specific permissions.

We’ve set up a single CodeDeploy Application and one Deployment Group per environment. In this case we’ll name the application my-wordpress and the deployment groups dg-staging and dg-production.
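As a rough sketch, the application and deployment groups could be created and used from the AWS CLI along these lines. The service role ARN, the `Environment` tag used to select instances, and the bundle bucket/key are assumptions for illustration, not part of our actual setup. If your instances live in an Auto Scaling group you would likely target that instead of a tag.

```shell
#!/usr/bin/env bash
# Sketch only: role ARN, tag key/values and bucket names are placeholders.

APP_NAME="my-wordpress"

# Create the CodeDeploy application plus one deployment group per environment.
# $1 = ARN of the service role that CodeDeploy assumes (hypothetical).
create_codedeploy_app() {
  local role_arn="$1" env
  aws deploy create-application --application-name "$APP_NAME" || return 1
  for env in staging production; do
    aws deploy create-deployment-group \
      --application-name "$APP_NAME" \
      --deployment-group-name "dg-$env" \
      --service-role-arn "$role_arn" \
      --ec2-tag-filters "Key=Environment,Value=$env,Type=KEY_AND_VALUE" || return 1
  done
}

# Bundle the current directory (which must contain appspec.yml) to S3,
# then deploy that bundle to one deployment group.
# $1 = deployment group name, $2 = bucket, $3 = object key for the bundle
deploy_revision() {
  local group="$1" bucket="$2" key="$3"
  aws deploy push \
    --application-name "$APP_NAME" \
    --s3-location "s3://$bucket/$key" \
    --source . || return 1
  aws deploy create-deployment \
    --application-name "$APP_NAME" \
    --deployment-group-name "$group" \
    --s3-location "bucket=$bucket,key=$key,bundleType=zip"
}
```

For example, `deploy_revision dg-staging my-bucket my-wordpress/releases/build.zip` would push the working tree and deploy it to staging. The same call with `dg-production` is what the Production Manager would run once staging is approved.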

Store configuration files in S3

Our deployment process contains some code that downloads the appropriate config file, depending on the environment. CodeDeploy makes the deployment group name available for our use.

    # Copy the appropriate config file from S3
    if [ "$DEPLOYMENT_GROUP_NAME" = "dg-staging" ]; then
        CONFIG_LOCATION=s3://my-bucket/my-wordpress/staging
    elif [ "$DEPLOYMENT_GROUP_NAME" = "dg-production" ]; then
        CONFIG_LOCATION=s3://my-bucket/my-wordpress/production
    else
        echo "Unrecognized deployment group name: $DEPLOYMENT_GROUP_NAME. Bailing out."
        # Use exit rather than return: outside a function, return does not stop
        # an executed script, and we want the hook (and the deployment) to fail.
        exit 1
    fi

    aws s3 cp "$CONFIG_LOCATION/wp-config.php" /var/www/html/

Make sure the IAM role used by your servers has read permission on this S3 bucket.
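One way to grant that permission is an inline policy on the instance role that allows `s3:GetObject` under the config prefix only. The sketch below generates such a policy and attaches it. The role name and the policy name `wordpress-config-read` are hypothetical, so substitute your own.

```shell
#!/usr/bin/env bash
# Sketch only: role and policy names are placeholders.

# Print an IAM policy document allowing reads under one prefix of one bucket.
# $1 = bucket name, $2 = key prefix
s3_read_policy() {
  local bucket="$1" prefix="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:GetObject",
      "Resource": "arn:aws:s3:::${bucket}/${prefix}/*"
    }
  ]
}
EOF
}

# Attach the policy inline to the role used by the web servers.
# $1 = role name (hypothetical), $2 = bucket name, $3 = key prefix
attach_config_read_policy() {
  aws iam put-role-policy \
    --role-name "$1" \
    --policy-name "wordpress-config-read" \
    --policy-document "$(s3_read_policy "$2" "$3")"
}
```

With the bucket layout used above, `attach_config_read_policy my-instance-role my-bucket my-wordpress` would cover both the staging and production config files. Scoping the prefix per environment is a reasonable tightening if staging and production use different roles.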

The WordPress Command Line

Any WordPress plugins or scripts must be added or activated using the wp-cli, because new instances can come online at any time (either added for scale or replacing terminated instances) and must end up in the same state. The wp-cli is installed using CodeDeploy’s BeforeInstall hook, which lets us make changes to WordPress in the AfterInstall hook.
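A BeforeInstall script for this might look roughly like the following. It uses the download location from the wp-cli installation instructions and skips the download when the binary is already there. The default target path `/usr/local/bin/wp` is an assumption, so adjust it to your AMI.

```shell
#!/usr/bin/env bash
# Sketch only: the target path is an assumption.

# Install wp-cli unless it is already present.
# $1 = where to install the binary (defaults to /usr/local/bin/wp)
install_wp_cli() {
  local target="${1:-/usr/local/bin/wp}"
  local url="https://raw.githubusercontent.com/wp-cli/builds/gh-pages/phar/wp-cli.phar"
  local tmp

  # Nothing to do if an earlier deployment already installed it.
  if [ -x "$target" ]; then
    echo "wp-cli already installed at $target"
    return 0
  fi

  tmp="$(mktemp)" || return 1
  # -f makes curl fail on HTTP errors instead of saving an error page.
  if ! curl -fsSL -o "$tmp" "$url"; then
    rm -f "$tmp"
    return 1
  fi
  chmod +x "$tmp" && mv "$tmp" "$target"
}
```

Calling `install_wp_cli` at the end of the hook script is enough. Because the function is a no-op when the binary exists, it is safe to run on every deployment, not just on fresh instances.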

Offload WordPress media files

In a single-instance install, WordPress stores uploaded files locally, but we want to be able to see all authored content in all environments! In order to avoid having to move content between instances we use a plugin which transparently uses S3 to store media files. Here’s what that install looks like in the deployment script:

    # Additional libraries for WP Offload S3 Lite
    wp plugin install amazon-web-services --activate --allow-root
    # WP Offload S3 Lite
    wp plugin install amazon-s3-and-cloudfront --activate --allow-root
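Since the AfterInstall hook runs on every deployment, it can help to make plugin setup idempotent. Here is a minimal sketch using wp-cli's `plugin is-installed` and `plugin is-active` checks. The `ensure_plugin` helper and the default path `/var/www/html` are our own illustration, not something the plugins require.

```shell
#!/usr/bin/env bash
# Sketch only: ensure_plugin is a hypothetical helper.

# Install and activate a plugin only when needed.
# $1 = plugin slug, $2 = WordPress root (defaults to /var/www/html)
ensure_plugin() {
  local slug="$1" path="${2:-/var/www/html}"

  if ! wp plugin is-installed "$slug" --path="$path" --allow-root; then
    wp plugin install "$slug" --path="$path" --allow-root || return 1
  fi

  if ! wp plugin is-active "$slug" --path="$path" --allow-root; then
    wp plugin activate "$slug" --path="$path" --allow-root || return 1
  fi
}
```

The hook would then call `ensure_plugin amazon-web-services` followed by `ensure_plugin amazon-s3-and-cloudfront`, in that order, since the second depends on the first.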

Database backup and movement

AWS simplifies the task of creating a high availability database by making RDS available to us. We use separate databases for each environment. We’d like to implement two additional features:

- A periodic database backup. RDS is unlikely to fail, but a backup also lets us roll the database back to a point in time if necessary.

- A way to “push” the production database to staging or the development environments. If content managers are working through the Production server, we’ll have to do this to keep the environments in sync.
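Both features can be sketched with the AWS CLI and wp-cli. This is one possible approach under stated assumptions, not what we run in production (we use ManageWP for the copy, see Plugins below). The DB instance identifier, the dump file location and the target URL are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: instance identifiers, file paths and URLs are placeholders.

WP_PATH="/var/www/html"

# Build a timestamped snapshot identifier (letters, digits and hyphens only),
# in the form <prefix>-YYYYMMDD-HHMM using UTC.
snapshot_id() {
  printf '%s-%s\n' "$1" "$(date -u +%Y%m%d-%H%M)"
}

# Take a manual RDS snapshot. $1 = DB instance identifier (hypothetical).
backup_database() {
  aws rds create-db-snapshot \
    --db-instance-identifier "$1" \
    --db-snapshot-identifier "$(snapshot_id "$1")"
}

# Run on a production instance: dump the WordPress database to a file.
# $1 = dump file
export_database() {
  wp db export "$1" --path="$WP_PATH" --allow-root
}

# Run on a staging or dev instance: load the dump, then rewrite the site URL
# so links point at the current environment instead of production.
# $1 = dump file, $2 = production URL, $3 = URL of this environment
import_database() {
  wp db import "$1" --path="$WP_PATH" --allow-root || return 1
  wp search-replace "$2" "$3" --all-tables --path="$WP_PATH" --allow-root
}
```

`backup_database` could be run from cron for the periodic backup. Note that RDS automated backups, when enabled, already support point-in-time restore, so manual snapshots are mostly useful as named checkpoints before a risky change. The dump file would typically travel between environments through S3.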

Protecting the Origin from public access using AWS WAF

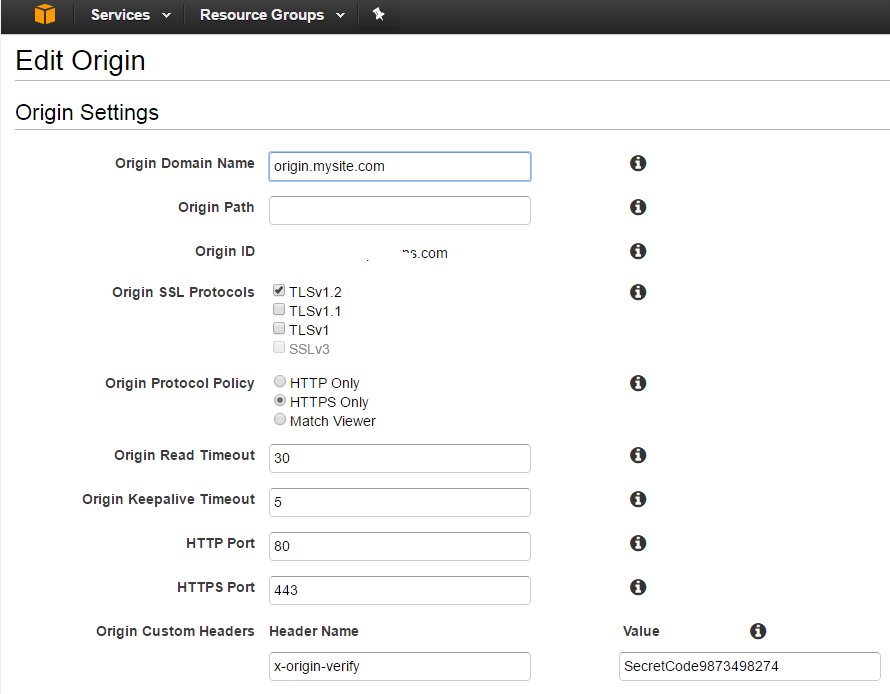

In our scenario, we needed a way to make the origin server inaccessible to the general public, while allowing content editors direct access to it. A Web Application Firewall (AWS WAF) is attached to the ALB. The WAF is set up so that ONE OF THE FOLLOWING rules must be satisfied for entry. All other requests are denied:

- For editors: There is a rule to allow internal company IPs. This can be modified as needed.

- For CloudFront: A verified-origin rule identifies these requests, based on a custom header which CloudFront adds to every request it sends to the origin.

- Any other special exceptions.

The second of these rules can be tested as follows, using curl from an EXTERNAL IP to add the header and simulate a CloudFront request:

    curl -sLIXGET -H 'x-origin-verify: SecretCode9873498274'
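To check both sides of the rule in one go, a small script can compare the status code with and without the header. This sketch assumes the WAF answers blocked requests with its default 403 response and that the origin is reachable at a URL you supply. The helper names are our own.

```shell
#!/usr/bin/env bash
# Sketch only: assumes the WAF block action returns the default HTTP 403.

# Print the HTTP status code for a URL.
# $1 = URL, $2 = optional extra request header
http_status() {
  local url="$1" header="${2:-}"
  if [ -n "$header" ]; then
    curl -s -o /dev/null -w '%{http_code}' -H "$header" "$url"
  else
    curl -s -o /dev/null -w '%{http_code}' "$url"
  fi
}

# Succeeds only if the origin blocks plain requests and lets through
# requests carrying the verification header.
# $1 = origin URL, $2 = header in "name: value" form
check_origin_protection() {
  local url="$1" header="$2" status_plain status_verified
  status_plain="$(http_status "$url")"
  status_verified="$(http_status "$url" "$header")"
  echo "without header: $status_plain, with header: $status_verified"
  [ "$status_plain" = "403" ] && [ "$status_verified" != "403" ]
}
```

Run it from a machine outside the allowed company IP range, passing the origin URL and the header, for example `check_origin_protection "$ORIGIN_URL" 'x-origin-verify: SecretCode9873498274'`. From inside the company network both requests would pass because the editor rule matches first, so the check would report a failure there.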

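On the CloudFront side, the same header is configured as a custom header on the origin, so CloudFront adds it to every request it forwards to the ALB.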

Plugins

The following plugins are used to support various aspects of scalability.

- C3 Cloudfront Cache Controller – invalidates the CloudFront cache as needed when content is edited.

- ManageWP – this tool does a LOT, but is used in our case for convenience when copying the production database to dev to achieve consistency.

- WP Offload S3 Lite – WordPress media files (like images) are stored in S3, so they can be accessed from all instances.

- WP BASIC Auth – to protect the dev environment from general visibility.